Prompt engineering is the process of designing and refining the instructions (called prompts) that you give to AI models so they can generate accurate, relevant, and high-quality responses.

In simple terms, it’s about learning how to “talk” to AI in a way that gets you the best possible results.

Think of it like working with a brilliant assistant: the clearer and more specific your request, the better the outcome.

That’s why businesses are now exploring prompt engineering services and AI prompt engineering consulting to train their teams and enhance their use of AI tools.

Originally, LLM prompt engineering was something only developers and researchers used. But today, it’s valuable for almost anyone: students, writers, marketers, or business leaders.

At its core, prompt design is about bridging human intent with machine intelligence. Let’s learn all about it in this detailed guide!

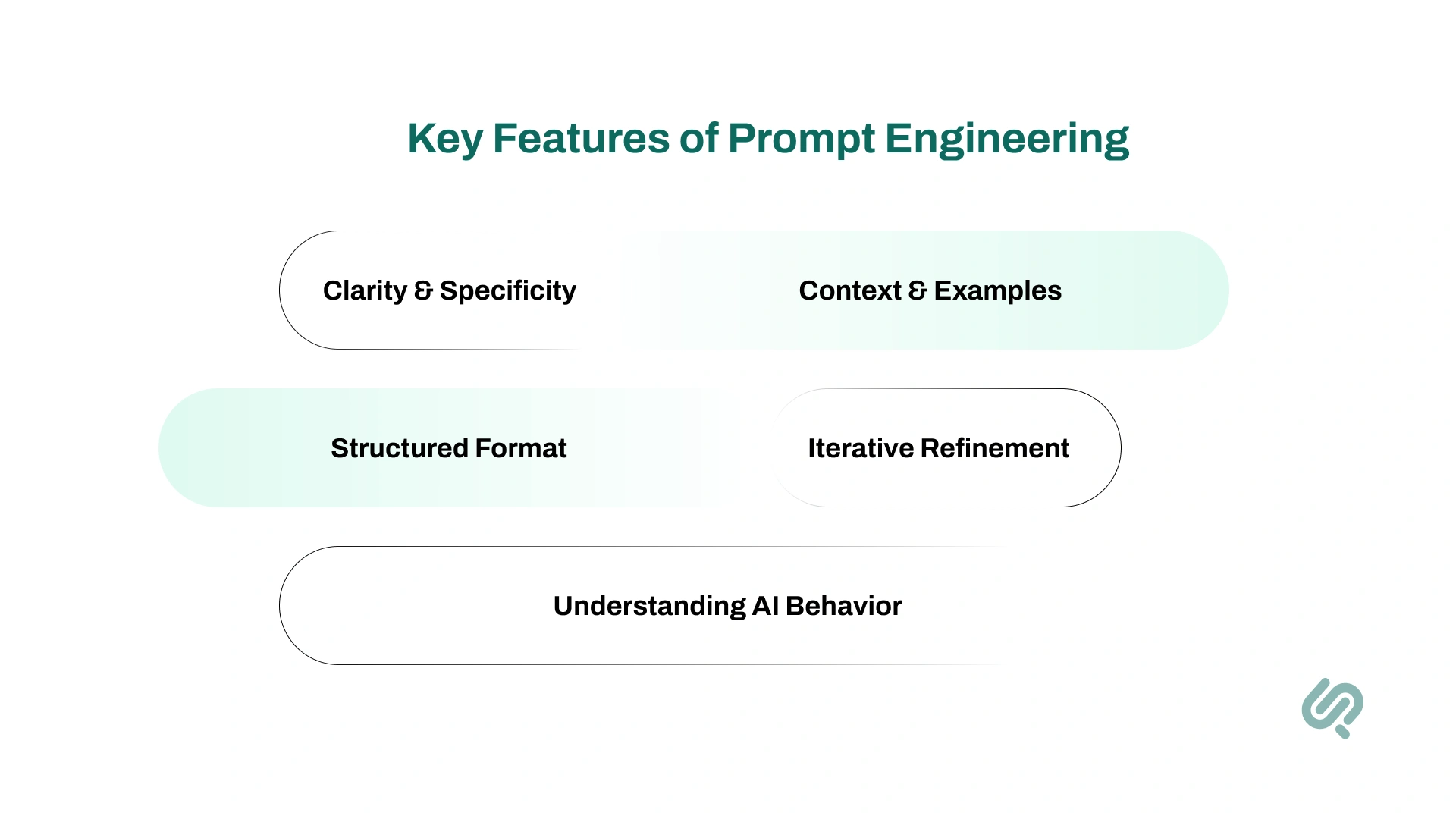

So, what makes a good prompt work?

These key features form the foundation of effective LLM prompt engineering and help you get consistent, high-quality results.

The clearer your prompt, the better the answer. Vague instructions confuse AI, while precise ones guide it toward useful results.

Example: Instead of “Summarize this”, ask “Summarize the following paragraph in one sentence, capturing the main idea.”

The more context you give, the smarter the response. Adding a role or defining the audience makes answers more accurate and relevant.

Example: Instead of “Give me advice,” ask “You are a career coach. Advise a 25-year-old web developer on AI career paths.”

Good design isn’t only about what you ask but how you want the answer delivered. You can request bullet lists, tables, JSON responses, or formal paragraphs.

Example: “Respond in JSON format with fields: title, summary, and keywords.”
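When you request JSON, it helps to check that the reply actually contains the fields you asked for. The sketch below is a minimal illustration of that idea; the helper names `build_json_prompt` and `parse_response` and the sample reply are hypothetical, and no real model is called.

```python
import json

# Fields requested in the example prompt above.
REQUIRED_FIELDS = ("title", "summary", "keywords")

def build_json_prompt(text: str) -> str:
    """Wrap the input text in an instruction that requests JSON output."""
    fields = ", ".join(REQUIRED_FIELDS)
    return f"Respond in JSON format with fields: {fields}.\n\nText:\n{text}"

def parse_response(raw: str) -> dict:
    """Parse a model reply and confirm every requested field is present."""
    data = json.loads(raw)
    missing = [name for name in REQUIRED_FIELDS if name not in data]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    return data

# Hypothetical reply, hard-coded here in place of a real model call.
sample_reply = (
    '{"title": "Prompt basics", '
    '"summary": "Clear prompts help.", '
    '"keywords": ["prompt", "AI"]}'
)
parsed = parse_response(sample_reply)
```

If the reply is not valid JSON or a field is missing, the helper raises an error, which is a natural signal to retry or tighten the prompt.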

Great prompts rarely work perfectly the first time. Experts and teams using prompt engineering services refine and test prompts using prompt engineering tools.

Example: The first prompt gives a vague summary, but after tweaking it to “Summarize the article in exactly three bullet points with one key takeaway each,” the AI delivers clearer, more structured results.

Skilled prompt engineers know how models “think.”

They adjust word choices, context length, and model settings like temperature. Using techniques such as chain-of-thought prompting and a well-structured hierarchy, they push the AI to perform at its best.

Example: Instead of “Solve this math problem,” asking “Explain your steps clearly and then give the final answer” guides the AI to show its reasoning.

At its heart, prompt engineering combines creativity with technical skill.

Successful prompt engineering relies on proven techniques and on tools like LangChain, OpenAI Playground, or Google Colab to test, refine, and scale prompts effectively.

Example: A prompt engineer might experiment with multiple versions of a customer support query until they find the one that consistently produces empathetic, accurate responses.

Prompt engineering matters because it makes AI truly useful. By giving clear instructions, you guide AI to deliver accurate, relevant, and context-aware answers. It transforms powerful models into practical tools for real-world tasks.

AI models like ChatGPT or DALL·E are powerful, but they don’t “know” what we want unless guided clearly.

Prompt engineering ensures AI understands context instead of producing random or irrelevant answers. That’s the magic of AI and machine learning services.

This refinement is how LLM engineering turns broad queries into precise, useful outputs.

Effective prompting reduces trial-and-error and saves time. Studies show well-structured prompts can cut follow-up queries by ~20%.

Iterative refinement can improve correctness by ~30% and reduce biased/inappropriate answers by ~25%. (1)

Organizations often rely on AI consulting to standardize prompts and scale accuracy.

For end users, prompt engineering makes AI responses feel coherent, relevant, and natural. It allows people to get accurate results without needing to craft perfect questions.

This improves customer service bots, chat apps, and other AI tools powered by prompt engineering services.

Businesses adopting AI are increasingly investing in prompt engineering services and advanced prompt engineering techniques.

The global prompt engineering market, valued at ~$222 million in 2023, is projected to reach ~$2.06 billion by 2030 (CAGR ~32.8%). (2)

Large organizations now form dedicated prompt teams, recognizing that prompt engineering makes AI applications more efficient and effective.

With 78% of companies using AI in 2024 (up from 55% in 2023), the need for skilled prompt engineers is booming.

ChatGPT alone reached $10B ARR by mid-2025, showing how central generative AI has become. (3)

Prompt engineers bridge the gap between end users and large language models, ensuring AI delivers real-world business value.

Prompts shape the way we interact with AI and influence the quality of responses. Below are 7 key types of prompts, each explained briefly with an example.

These are the simplest prompts; you just instruct without any examples. The AI relies only on its training to generate an answer.

Example: “Translate ‘Hello’ into French.”

Here, you provide one example along with the task so the AI understands the expected pattern. This often makes the results more accurate than a zero-shot prompt.

Example: “Translate English to French: Cat → Chat. Now translate ‘Dog.’”

In this type, you include several examples of input and output to guide the AI. This helps the model mimic the structure or style you want.

Example: “Translate English to French: 1. Hello → Bonjour, 2. Thank you → Merci. Now translate ‘Good morning.’”
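Zero-shot, one-shot, and few-shot prompts differ only in how many worked examples precede the new input. The sketch below shows one way to build all three from the same template; the function name `build_few_shot_prompt` and the `->` layout are illustrative assumptions, not a standard.

```python
def build_few_shot_prompt(task: str, examples: list[tuple[str, str]], query: str) -> str:
    """Build a prompt from a task line, zero or more examples, and the new input."""
    lines = [f"{task}:"]
    for source, target in examples:
        lines.append(f"{source} -> {target}")
    # The final line is left open so the model completes the pattern.
    lines.append(f"{query} ->")
    return "\n".join(lines)

task = "Translate English to French"

zero_shot = build_few_shot_prompt(task, [], "Hello")
one_shot = build_few_shot_prompt(task, [("Cat", "Chat")], "Dog")
few_shot = build_few_shot_prompt(
    task,
    [("Hello", "Bonjour"), ("Thank you", "Merci")],
    "Good morning",
)
```

Keeping the examples in a list makes it easy to add, remove, or swap them while testing which set gives the most consistent output.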

These prompts ask the AI to explain its reasoning step by step before giving the final answer. They’re especially useful for math, logic, and multi-step tasks.

Example: “Solve 25 × 4. Show your steps clearly before giving the final answer.”

You assign the AI a role or persona to shape tone and style. This makes the output more relevant for the intended audience or situation.

Example: “You are a financial advisor. Explain investment basics to a beginner.”

These prompts add clear rules like format, tone, or length. By setting constraints, you reduce vagueness and make the response more structured.

Example: “Summarize this article in 3 bullet points, each under 10 words.”
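Constraints are easiest to enforce when you can check them automatically. The sketch below tests a reply against the rule in the example above (3 bullets, each under 10 words); the function name `check_summary` and both sample replies are made up for illustration.

```python
def check_summary(text: str, bullets: int = 3, max_words: int = 9) -> list[str]:
    """Return a list of constraint violations (empty if the reply complies)."""
    # Treat every non-empty line as one bullet and strip common bullet markers.
    items = [line.strip().lstrip("-* ").strip() for line in text.splitlines() if line.strip()]
    problems = []
    if len(items) != bullets:
        problems.append(f"expected {bullets} bullets, got {len(items)}")
    for index, item in enumerate(items, start=1):
        count = len(item.split())
        if count > max_words:  # "under 10 words" means at most 9
            problems.append(f"bullet {index} has {count} words")
    return problems

good_reply = (
    "- Clear prompts improve answers\n"
    "- Context makes replies relevant\n"
    "- Iteration refines results"
)
bad_reply = "- One bullet that runs on for far too many words to be acceptable here"

good_problems = check_summary(good_reply)
bad_problems = check_summary(bad_reply)
```

A non-empty result can be fed back into a follow-up prompt, for example by asking the model to fix the listed violations.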

Instead of asking for everything at once, you break the task into smaller steps. Each prompt builds on the previous one, producing a more detailed final output.

Example: First: “Outline a blog about AI.” → Then: “Expand each section into 2 paragraphs.”

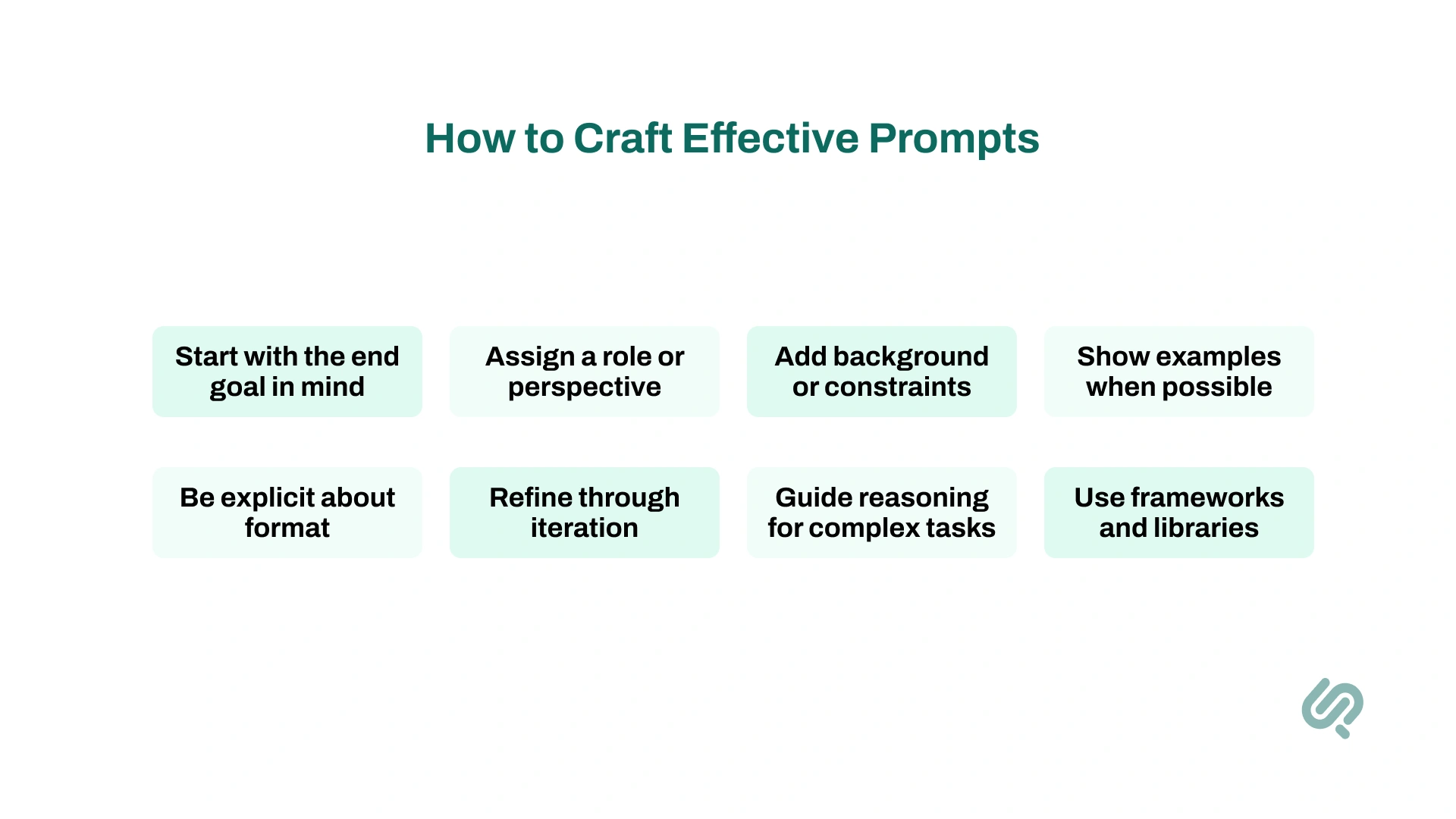

Crafting a good prompt is like giving directions. You’ll only get to the right destination if your instructions are clear and well thought out.

Here’s a practical approach to writing prompts that consistently deliver strong results:

Think about what you want the AI to produce before you type the prompt. Is it a summary, a list, an explanation, or a piece of creative writing? Having the output format in mind helps you shape your request.

Give the AI a persona to guide tone and style. This makes outputs more natural and context-aware.

Help the AI focus by adding details like audience, region, or time frame.

Demonstrate the structure you want through examples. This “few-shot” method boosts accuracy and makes results more predictable.

If you need a bullet list, JSON, table, or structured code, spell it out in your prompt.

Don’t expect the first try to be perfect. Adjust wording, break down tasks, or add detail until you get the result you want.

For multi-step or logical problems, ask the AI to explain its thought process. This reduces errors and improves reliability.

Instead of reinventing the wheel, use resources like open-source prompt libraries, LangChain, or the PARSE framework (Persona, Action, Requirements, Situation, Examples).
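The steps above can be captured in a small reusable template. The sketch below uses the five PARSE components named in this guide as fields; the class name `ParsePrompt`, the field order, and the rendered wording are assumptions made for illustration rather than an official definition of the framework.

```python
from dataclasses import dataclass, field

@dataclass
class ParsePrompt:
    """Hypothetical container for the five PARSE components."""
    persona: str
    action: str
    requirements: list[str] = field(default_factory=list)
    situation: str = ""
    examples: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Join the filled-in components into a single prompt string."""
        parts = [f"You are {self.persona}.", self.action]
        if self.requirements:
            parts.append("Requirements:\n" + "\n".join(f"- {r}" for r in self.requirements))
        if self.situation:
            parts.append(f"Context: {self.situation}")
        if self.examples:
            parts.append("Examples:\n" + "\n".join(self.examples))
        return "\n\n".join(parts)

prompt = ParsePrompt(
    persona="a career coach",
    action="Advise a 25-year-old web developer on AI career paths.",
    requirements=["Use a bullet list", "Keep it under 150 words"],
).render()
```

Empty components are simply skipped, so the same template works for quick prompts and for fully specified ones.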

To see the impact of good prompting, let’s look at a few real scenarios where a vague request is transformed into something powerful with prompt design.

Why It Works: Adds persona, audience, context, length, and purpose. The AI now produces a focused, age-appropriate tale.

Why It Works: Defines the audience, topic, and length, and requires examples. This ensures clarity and accessibility.

Why It Works: Supplies data, defines the task, and specifies the output format. The AI can now complete the task step-by-step.

Why It Works: Rich descriptive details guide the AI to generate a unique, interesting image.

Why It Works: Clearly defines the language, functionality, and requirements. This results in usable, production-ready code.

Prompt engineers rely on a growing ecosystem of tools to design, test, and refine their work.

These resources make it easier to scale, collaborate, and improve prompts while keeping outputs consistent.

Platforms like OpenAI Playground, Hugging Face’s Transformers, and Google’s Vertex AI let users experiment with prompts on different large language models (GPT-4, Bard, LLaMA, etc.).

They provide control over settings such as temperature, max tokens, and output length, helping engineers understand how small changes affect results.

These interactive sandboxes are often the first step in learning effective prompt hierarchy.
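To build intuition for the temperature setting, it can help to see what it does to a probability distribution. Temperature is commonly described as dividing the model's raw scores (logits) before they are turned into probabilities. The sketch below follows that description with made-up logits; real providers may implement the setting differently.

```python
import math

def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Convert raw scores to probabilities after scaling by the temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [value / temperature for value in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(value - peak) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]

# Made-up scores for three candidate next tokens.
logits = [2.0, 1.0, 0.1]

cold = softmax_with_temperature(logits, 0.5)  # sharper: favors the top token
hot = softmax_with_temperature(logits, 2.0)   # flatter: spreads probability out
```

Lower values concentrate probability on the most likely token, which tends to give more repeatable answers; higher values flatten the distribution and allow more varied output.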

Frameworks like LangChain, PromptLayer, and PromptPad allow prompt engineers to organize, chain, and integrate prompts directly into applications.

They come with built-in helpers for tasks such as few-shot formatting, JSON parsing, and structured outputs.

Such libraries are essential for applying advanced techniques in real-world systems.

Tools like PromptFlow, PromptPerf, DeepEval, and Latitude are designed for A/B testing, logging, and performance tracking.

These services make iterative refinement possible at scale by helping teams measure accuracy, detect bias, and optimize prompts continuously.

Enterprises often build internal prompt libraries or repositories of vetted templates that capture best practices for repeatable success.
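The idea behind A/B testing prompts can be sketched without any of the tools named above: run each prompt variant over the same labeled cases and compare scores. Everything below is illustrative; `fake_model` is a deterministic stand-in written for this example, not a real model or a real tool's API.

```python
from typing import Callable

def score_prompt(model: Callable[[str], str], template: str, cases: list[tuple[str, str]]) -> float:
    """Return the fraction of cases where the reply exactly matches the label."""
    hits = 0
    for text, expected in cases:
        reply = model(template.format(text=text))
        if reply.strip().lower() == expected:
            hits += 1
    return hits / len(cases)

def fake_model(prompt: str) -> str:
    """Stand-in for an LLM: answers tersely only when asked for one word."""
    label = "positive" if "love" in prompt else "negative"
    if "one word" in prompt:
        return label
    return f"I think the sentiment is {label}."

cases = [("I love this phone", "positive"), ("The battery died fast", "negative")]

variants = {
    "A": "What is the sentiment? {text}",
    "B": "Classify the sentiment as positive or negative. Answer with one word.\nText: {text}",
}
scores = {name: score_prompt(fake_model, template, cases) for name, template in variants.items()}
```

With this stand-in, variant B scores higher because its format instruction produces replies that match the labels exactly. Against a real model you would use more cases and likely a more forgiving scoring rule.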

Teams increasingly document prompt strategies using Git, Notion, or Confluence.

These platforms store structured prompt hierarchy (system messages, user prompts, follow-ups) and share guidelines across teams.

For companies adopting AI at scale, this documentation is as valuable as code, making shared knowledge bases critical.
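A prompt hierarchy of system message, user prompt, and follow-ups can be stored as plain data and versioned like any other file. The sketch below uses a list of `role`/`content` dictionaries, a layout used by several chat-style APIs; treat the exact keys and the `build_messages` helper as assumptions and check your provider's documentation.

```python
import json
from typing import Optional

def build_messages(system: str, user: str, follow_ups: Optional[list[str]] = None) -> list[dict]:
    """Assemble a layered prompt: system message first, then user turns."""
    messages = [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]
    # In a real conversation the model's replies would sit between these turns.
    for text in follow_ups or []:
        messages.append({"role": "user", "content": text})
    return messages

template = build_messages(
    system="You are a support agent. Be empathetic and accurate.",
    user="A customer reports a late delivery. Draft a reply.",
    follow_ups=["Shorten the reply to three sentences."],
)

# Serialized form that could be committed to a repository and reviewed like code.
serialized = json.dumps(template, indent=2)
```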

Once you’ve mastered the basics, there’s a whole world of advanced engineering techniques that take AI outputs to the next level.

These methods are used by skilled practitioners to handle complex tasks, improve accuracy, and make AI more reliable in real-world applications.

Adding cues like “Let’s think step by step” encourages the model to show its reasoning process. Especially useful for math, logic puzzles, or multi-step planning.

Turns vague or incorrect answers into accurate solutions by making the AI “explain its work.”
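In practice, a step-by-step cue is often paired with a fixed marker for the final answer so it can be pulled out of the reasoning. The sketch below shows one way to do that; the helper names, the `Final answer:` marker, and the sample reply are assumptions for illustration, and no model is called.

```python
import re
from typing import Optional

def with_step_by_step(question: str) -> str:
    """Append a reasoning cue and ask for a clearly marked final line."""
    return (
        f"{question}\n"
        "Let's think step by step, then finish with a line "
        "'Final answer: <value>'."
    )

def extract_final_answer(reply: str) -> Optional[str]:
    """Return the text after 'Final answer:' or None if the marker is missing."""
    match = re.search(r"^Final answer:\s*(.+)$", reply, flags=re.MULTILINE)
    return match.group(1).strip() if match else None

prompt = with_step_by_step("Solve 25 x 4.")

# Hypothetical reply, hard-coded here in place of a real model call.
sample_reply = "25 x 4 = 25 x 2 x 2\n25 x 2 = 50\n50 x 2 = 100\nFinal answer: 100"
answer = extract_final_answer(sample_reply)
```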

Instead of asking the AI to do everything in one go, break the task into smaller steps.

Example: First ask for an outline, then expand each point into detailed sections.

Mimics a developer’s pipeline and often boosts productivity by structuring outputs across multiple prompts.
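A chained workflow like the outline-then-expand example can be sketched as a small function that feeds one reply into the next prompt. The `run_chain` helper and `fake_model` below are hypothetical; `fake_model` is a stand-in so the example runs offline, and a real setup would call an actual model there.

```python
from typing import Callable

def run_chain(model: Callable[[str], str], topic: str) -> list[str]:
    """Ask for an outline first, then expand each outline item separately."""
    outline = model(f"Outline a blog about {topic}. One section title per line.")
    sections = [line.strip() for line in outline.splitlines() if line.strip()]
    return [
        model(f"Expand the section '{title}' into 2 paragraphs.")
        for title in sections
    ]

def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM call so the sketch runs offline."""
    if prompt.startswith("Outline"):
        return "What is AI\nWhy it matters"
    return f"[draft for: {prompt}]"

drafts = run_chain(fake_model, "AI")
```

Because each step is a separate call, you can inspect or edit the outline before any section is expanded.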

Provide 1–3 examples in the prompt so the AI understands the pattern.

Example: For sentiment analysis, show a couple of labeled examples, then give the new sentence.

This classic prompt engineering strategy is still highly effective with GPT-4 and beyond.

Careful prompt design includes splitting long inputs into smaller chunks.
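One simple way to split a long input is by word count, with a small overlap so context carries across chunk boundaries. The sketch below illustrates that approach; the function name `chunk_words` and the default sizes are arbitrary assumptions, and real model limits are measured in tokens rather than words.

```python
def chunk_words(text: str, max_words: int = 200, overlap: int = 20) -> list[str]:
    """Split text into word-based chunks that share `overlap` words with the previous chunk."""
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must be non-negative and smaller than max_words")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks

sample = " ".join(f"w{i}" for i in range(10))
pieces = chunk_words(sample, max_words=4, overlap=1)
```

Each chunk can then be sent in its own prompt, for example to summarize sections one at a time before combining the results.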

Adjusting randomness controls like temperature or top-k/top-p sampling helps refine outputs.

Small changes in formatting or parameters can dramatically improve precision.
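Top-p (nucleus) sampling is usually described as keeping the smallest set of most likely tokens whose combined probability reaches a threshold, then sampling only from that set. The sketch below implements that description on a made-up distribution; the function name `top_p_filter` is hypothetical and provider implementations may differ in detail.

```python
def top_p_filter(probs: dict[str, float], top_p: float) -> dict[str, float]:
    """Keep the most likely tokens until their cumulative probability reaches top_p, then renormalize."""
    ranked = sorted(probs.items(), key=lambda pair: pair[1], reverse=True)
    kept = []
    cumulative = 0.0
    for token, p in ranked:
        kept.append((token, p))
        cumulative += p
        if cumulative >= top_p:
            break
    total = sum(p for _, p in kept)
    return {token: p / total for token, p in kept}

# Made-up next-token probabilities.
probs = {"cat": 0.5, "dog": 0.3, "fish": 0.15, "axolotl": 0.05}

nucleus = top_p_filter(probs, top_p=0.75)
```

With a threshold of 0.75, only the two most likely tokens survive, so rare continuations are cut off entirely. Raising the threshold toward 1.0 lets more of the tail back in.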

In specialized fields (medical, legal, finance, technical), prompts must include domain language and context.

Example: A healthcare prompt might say: “Use medical terminology and follow standard clinical guidelines when explaining the diagnosis.”

Ensures the AI applies knowledge within the correct professional boundaries.

Advanced prompts add guardrails to reduce the risk of harmful or biased outputs.

Example: “Avoid giving legal advice” or designing test prompts that check for unwanted bias.

While not perfect, this helps align AI with ethical and compliance standards.

Many engineers combine prompt engineering tools with coding and external APIs.

Example: “Write Python code to analyze this dataset and generate a bar chart.”

Blends AI responses with software engineering workflows, powerful for data analysis, visualization, and automation.

Some of the most useful advantages of prompt engineering are:

Naturally, there are some disadvantages too:

Prompt engineering is still a relatively new discipline, which means practitioners face a unique set of challenges:

Large language models can behave inconsistently. A prompt that works well today might deliver a different response after a model update or when used with a different AI system.

Small wording changes often lead to drastically different outputs. This makes prompts feel “fragile” and requires constant testing and refinement to achieve reliable results.

What works for GPT-4 may not work for Bard, Claude, or LLaMA. Engineers must adapt prompts for different systems, which increases complexity.

Because the field is young, there are no universally accepted guidelines for prompt design or prompt hierarchy. Teams often create their own playbooks, leading to inconsistencies.

Without clear guardrails, prompts may unintentionally produce biased, misleading, or unsafe outputs. This challenge makes AI prompt engineering consulting crucial for organizations handling sensitive or regulated data.

Despite these challenges, the outlook for prompt engineering is promising. Several trends point toward its rapid growth and formalization:

Advanced models like GPT-4 and GPT-4o solve more problems “out of the box.” However, complex and industry-specific tasks still require advanced techniques to get precise results.

The industry is moving toward formalized standards such as design patterns, certifications, and structured workflows. Courses, guides, and conferences on LLM prompt engineering are already available.

Prompting is merging with software engineering. APIs, frameworks, and open-source projects like GPT-Engineer now include prompt optimization features. Techniques such as prompt-tuning blur the line between writing prompts and training models.

With AI regulation on the rise, prompts will be audited for compliance and ethical use. Guardrails like “Avoid medical advice” or “Filter sensitive content” will become standard. Businesses will increasingly rely on prompt engineering services to keep outputs safe and trustworthy.

Everyday tools such as chatbots, productivity apps, and voice assistants will embed prompt engineering tools under the hood. Non-experts will benefit from “smart prompts,” while expert engineers will still refine and apply specialized techniques for advanced use cases.

Prompt engineering is the bridge between human ideas and AI responses.

With the right prompts, you can turn complex models into simple, helpful tools that solve real problems.

Whether it’s for writing, coding, analysis, or customer support, learning prompt engineering helps you get the most out of AI.

As this field grows, it’s clear that crafting better prompts isn’t just a skill. It’s the foundation of working effectively with AI in the future.

Prompt engineering is the practice of writing clear instructions for AI models so they give accurate, relevant, and high-quality responses.

A strong AI prompt usually includes:

A prompt is the instruction you give to an AI. Example: Instead of “Summarize this,” say “Summarize this article in three bullet points highlighting key insights.”

Begin by practicing with tools like ChatGPT or OpenAI Playground. Focus on writing clear, detailed prompts, experiment with formats, and refine until you get consistent results.

Yes. It’s a growing field with rising demand. Many companies now hire prompt engineers or invest in AI prompt engineering consulting, with salaries averaging over $120K in the US.